
I am still working to transcribe a mediocre-to-poor-quality tape. I am very glad that I took notes, something I have not done much of during the few in-person interviews I completed in the past, because I found myself focusing on the words of this interview as I wrote. I had actually been afraid that taking notes might diminish my focus, but I think it worked in some ways to enhance it. Part of the purpose of taking notes was to help guide my follow-up questions, and it was effective for that, too.

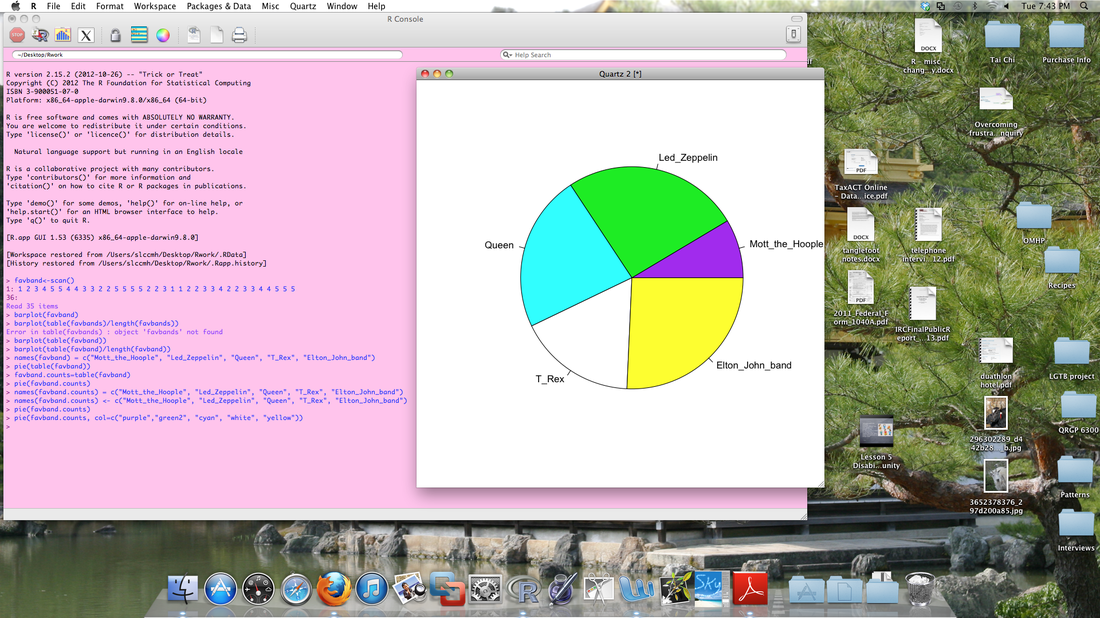

The picture above is a screenshot of the software program/language R.


I have begun the process of transcribing my class project interview. I originally planned to use Transana - a new program for me.

It is not, in my opinion, extremely intuitive, and weeding through the directions and the demo took some time. I can see why so many electronic devices come with 'quick start' guides: people just want to be able to use something! I did find a quick start guide of sorts online, although by that time I had already worked out how to start transcribing.

...playing with the GoAnimate website. This very primitive effort was put together 'from scratch' by me. The 'tea' lines are a nod to the late Douglas Adams's "The Hitchhiker's Guide to the Galaxy" books. (And, of course, the 'the computer has been drinking' line was inspired by Tom Waits's song from long, long ago, "The Piano Has Been Drinking.")

This is a picture of one of the two Olympus voice recorders I own and had prepared for today's telephone interviews. I tested both recorders yesterday and consulted the manuals to be certain I was comfortable with the operating instructions. I charged one yesterday afternoon and the second one today. What I failed to do was test the ear pickup for telephone recording. I had used it successfully many, many times between January and February of 2012, and it produced good-quality recordings over and over. When I started the call, I looked at the levels, and seeing them move, I was confident everything was working. (Of course, no one writes a setup like this and ends it by saying... "and I was right - everything went perfectly well!")

Since I went to bed yesterday still thinking about constructionism/constructivism, and I read a summary of the methods section of a mixed methods research paper late yesterday, it is no surprise that these things are still on my mind this morning.

I am almost finished with Bryman's "Social Research Methods" (4th ed.) (Sage, 2012), and one of the first things he mentions in the chapter about conversation analysis is 'anti-realism.' He also aligns the approach with constructionism (not constructivism!). A common feature of the qualitative methods descriptions I read (including the one I read late yesterday) is a mention of 'triangulation.' Less often, I see the term 'validity' used. In the report I just read, the authors used 'member-checking' (sending the transcripts back to the co-researchers) and consensus coding, plus another technique that escapes me now. I have thought a lot recently about consensus coding and have started to question the motive. When coming from a constructionist standpoint, what does consensus in coding 'prove'? (That two researchers agree on someone else's interpretation, even if they need to compromise to do so?) I am not certain that this improves validity; might it not point to some diluted version of the co-researcher's interpretation? Since I am not looking for the 'truth,' why would I be so worried about this type of agreement? It seems to me that using different methods to triangulate makes far more sense, because you can examine the consistencies between what the co-researcher says and does, or says and writes (depending on your methods). As far as validity goes, I think we are always working somewhat in the near dark: what I write, even if it is merely a transcript, will inevitably reflect my interpretation of the co-researcher's interpretation (IPA keeps emerging for me) of his or her experiences. Does it matter more that I represent the construct of interest than that I represent how the co-researcher views this construct? What if he or she is way off base when compared to the usual and expected definitions or manifestations of some theoretical construct?
I do think there is some merit to checking coding for completeness, and to having some discussions where another researcher or co-researcher might question a code, but I do not see much merit in striving for a percentage of agreement (à la 'interrater reliability'). I read some research not long ago suggesting that disagreements very likely end in compromise, which to me would represent either dilution, as I mentioned above, or even alteration of the co-researcher's views. If I am that concerned about 'accuracy,' I might as well use a survey. I know that these issues have been discussed in many of my readings, but I think the questions only really begin to make sense as I encounter them (or anticipate them) in my actual research. So, as with a lot of things, the more you do, the less you know.

My self-interview is about 18 minutes long. I said some interesting things to myself, and I think it is probably worth keeping for at least a short time to listen to again. In fact, comparing it with my classmate interview tomorrow might be interesting.

Created on GoAnimate with a GoPlus membership. The scene is a template scene; standard characters and voices were used. This is version #2; version #1 referred to a 'social constructivist' rather than a 'social constructionist' approach. I am still trying to work my way through the distinction, although Alan Bryman in "Social Research Methods" (4th ed.) (Sage, 2012) states that the former is an alternative term for the latter. I felt more comfortable using 'constructionist' since it is Bryman's choice for general use within his text.

My class interview is scheduled for tomorrow a.m. and in preparation, I did a practice interview with myself this morning.

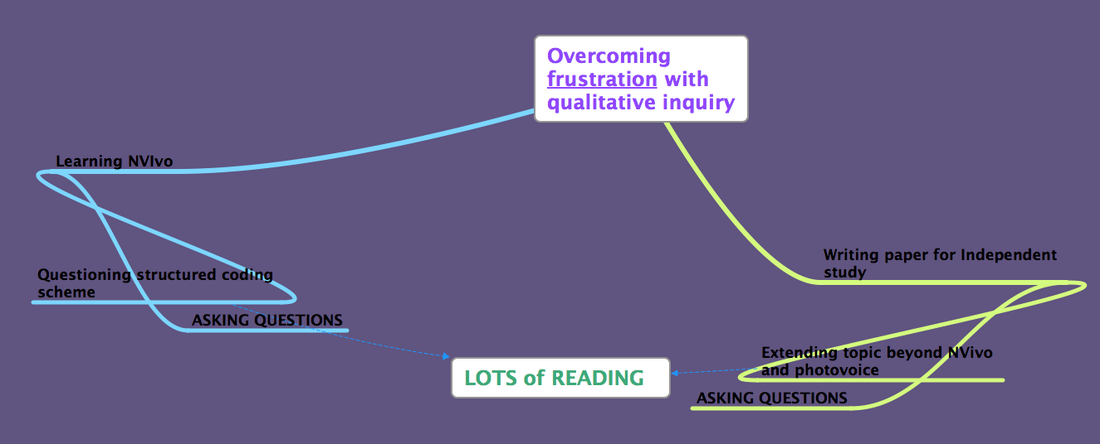

This procedure was inspired by Dr. Chenail's article "Interviewing the investigator: Strategies for addressing instrumentation and researcher bias concerns in qualitative research" (The Qualitative Report, Jan 2011; http://www.nova.edu/ssss/QR/QR16-1/interviewing.pdf).

This slide was taken from a presentation I gave to my graduate seminar class. My purpose was to present some of Johnny Saldaña's information from TQR 2013 about using MS Word for coding, since we have no site licenses for CAQDAS here at Ole Miss. Given that most of the students attending the seminar have no prior qualitative training, I thought that preparing and presenting an overview of the process of qualitative inquiry would be appropriate. I leaned very heavily on Creswell's "Qualitative inquiry and research design: Choosing among five approaches" (3rd ed., Sage) for the overview, although the graphic above represents the way I feel part of the time: what happens inside the box???

One of the important considerations for this interview assignment is what technology to use. This is a distance interview, so technology is going to be necessary. One alternative is to use the 'practice Elluminate classroom' in the Blackboard online learning program. Pluses include that it has its own recording capability and is familiar and accessible to the students in the class. The negatives I see have to do with sound: there is feedback or echo at times, and I have noticed from the beginning that every so often the sound 'cuts out' briefly, for maybe a second or less. I do not know if that is captured on the recordings; I have only ever missed one live session, so I am not in the habit of listening to the recordings.


Author

I am Sheryl L. Chatfield, Ph.D., C.T.R.S. I am a member of the faculty in the College of Public Health at Kent State University. I also co-coordinate the Graduate Certificate in Qualitative Research, and I am a member of the Design Innovation Team at Kent State.
