
I used to imagine putting together a presentation in which a person and a computer program competed to see which could perform better at qualitative coding. There were a lot of problems with this, including the need to define "perform better." But the idea was motivated in part by learning about the auto-coding functions in some qualitative software programs. I have never used any of these functions, but I assume they are based on some version of a "keyword in context" (KWIC) search-and-code process, the kind of thing that can be done with basic programs like AntConc. My presentation was also motivated by the old folk tale and/or folk song about John Henry, a man who competed against a machine. The man won, sort of, although in most versions of the story he also died from the exertion. Wikipedia suggests instead that he died from dust inhalation, which, sadly, seems more realistic, although it is less dramatic.
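Since I am only guessing that auto-coding rests on keyword-in-context searching, here is a minimal Python sketch of what that guess might look like, assuming plain-text transcripts. The `kwic` function lists each match of a keyword with a few words on either side (roughly what a concordance tool like AntConc displays), and `auto_code` is a hypothetical keyword-matching coder with an invented codebook; neither is taken from any actual qualitative software program.

```python
import re


def tokenize(text: str) -> list[str]:
    # Split into words, keeping simple contractions like "don't" together.
    return re.findall(r"\w+(?:'\w+)?", text)


def kwic(text: str, keyword: str, window: int = 4) -> list[str]:
    """Keyword in context: one line per case-insensitive match of `keyword`,
    showing up to `window` words on either side, with the match in brackets."""
    words = tokenize(text)
    lines = []
    for i, word in enumerate(words):
        if word.lower() == keyword.lower():
            left = " ".join(words[max(0, i - window):i])
            right = " ".join(words[i + 1:i + 1 + window])
            lines.append(f"{left} [{word}] {right}".strip())
    return lines


def auto_code(segment: str, codebook: dict[str, list[str]]) -> list[str]:
    """Hypothetical keyword auto-coder: apply every code that has at least one
    keyword present in the segment. No judgment about meaning is involved."""
    tokens = {w.lower() for w in tokenize(segment)}
    return sorted(
        code
        for code, keywords in codebook.items()
        if any(k.lower() in tokens for k in keywords)
    )


if __name__ == "__main__":
    text = "The man raced the machine. The machine won, but the man kept his name."
    for line in kwic(text, "machine", window=2):
        print(line)
    # Invented codebook, for illustration only.
    codebook = {"technology": ["machine", "computer"], "labor": ["hammer", "work"]}
    print(auto_code(text, codebook))
```

The sketch applies a code whenever a listed word appears, with no sense of irony, negation, or context, which is presumably where a human coder would have the edge in my imagined contest.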

The thing that made me think about all of this was the KFC promotion I read about in the news last week. The article I read actually says "KFC blames its bot" for an inappropriate product promotion tied to Kristallnacht, an anniversary of Holocaust-related events. Clearly, I thought, when I saw the first report, KFC had relied on some type of algorithm to search out holidays in the countries where it has locations, so that social media posts could be generated automatically. As an aside, this makes me consider how impressive it probably was for the first people who received customized form letters with their name(s) and other details, sometime around the early days of word processors in the 1980s. My parents certainly got letters like this: "Dear Robert and Bonnie: Have you wondered how to improve the quality of your lawn where you live at 3678 Bryan Road, Obetz, Ohio...." (These are all approximations of real names and places.) I assume the fonts for the placeholder text were all a little bit off. I use a lot of mail merge myself for data management (moving things from Excel to Word), and it is pretty much seamless these days, as long as you are careful about where you put the placeholders. This whole KFC thing last week also reminded me of a prompt I had in my Amazon account in the early 2000s.
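For anyone curious how mail merge placeholders work under the hood, here is a minimal Python sketch using the standard library's `string.Template`. The template text and the single record are adapted from the approximated letter above, and `merge` is a hypothetical helper for illustration, not how Word actually implements mail merge. `Template.substitute` raises a `KeyError` when a record is missing a placeholder's field, which is one way a carelessly placed placeholder shows up.

```python
from string import Template

# Hypothetical letter template; $names and $address are the placeholders.
LETTER = Template(
    "Dear $names: Have you wondered how to improve the quality of your lawn "
    "where you live at $address...."
)


def merge(template: Template, records: list[dict[str, str]]) -> list[str]:
    """Fill the template once per record, like a mail merge from a spreadsheet.

    Template.substitute raises KeyError if a record lacks a field that a
    placeholder needs, instead of silently printing the placeholder.
    """
    return [template.substitute(record) for record in records]


if __name__ == "__main__":
    rows = [{"names": "Robert and Bonnie", "address": "3678 Bryan Road, Obetz, Ohio"}]
    for letter in merge(LETTER, rows):
        print(letter)
```

Rows exported from an Excel sheet as CSV could be fed in with `csv.DictReader`, whose rows are dictionaries keyed by the header row.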


I participated in the 2022 Kent Stage "Ghost Walk" on Saturday evening. This year, instead of going around downtown Kent or to spooky spots along the Cuyahoga River, the event took place in the Wolcott Lilac Gardens, a local attraction. I had never heard of the gardens, but I went on a ghost walk a few years ago and heard from area ghost hunters, so I thought I'd give it another try at a new location.

The walk and stories were enjoyable, although the event went on a little long and it got sort of cold. I thought about it a lot afterward and had vivid dreams. The next day, when I thought again about some of what I saw and heard, I realized I had encountered both qualitative and quantitative approaches to ghost hunting.

In many ways, I find that Stephen King's description of the fictional setting of "The Dark Tower" series, "the world has moved on," applies to aspects of research. While I acknowledge the interest in Big Data, that is not what I have in mind now. My current concern is the thing variously referred to as a "literature review" or "review of literature," and its associated variations.

To put it bluntly, I believe reviews of literature are increasingly exercises in process rather than relevant, useful contributions to knowledge. I acknowledge that approaches to reviewing (findings from) prior research have been undergoing refinement for years. Meta-analyses and other (quantitative) pooled-results reports, and meta-syntheses and other (qualitative) integrated-results reports, have been around for decades. There are systematic reviews, mostly identified by use of a systematic, thorough, but not typically exhaustive search and selection process; scoping reviews, characterized by a narrower scope; and even narrative reviews, which tend to be a (preference-based? convenience-based?) sample of research summaries. There are increasing numbers of software programs and applications to support these efforts, including general qualitative data analysis software (QDAS) programs, although Microsoft Excel can often be made to do what is needed. Conventionally, reviews of prior literature are either used to build rationales for new studies or comprise freestanding reports. As anyone who has used library database programs or database aggregators can attest, the number of hits (that is, articles displayed following a keyword or other type of query) has increased exponentially since the turn of the century. People use various criteria to limit the number of sources that have to be screened, including publication date and attributes of the research such as publication language, study design, and participant characteristics. Sometimes these criteria are arbitrary, sometimes they are logical, and sometimes they seem mostly to represent the easiest way to greatly reduce the sample. But I think the biggest limitation on the usefulness of, or potential interest in, any review of literature is its reliance on published research, usually peer-reviewed published research.
This may make a strange kind of sense, since the aim is often to use the results to justify another research study, which will itself be published in a peer-reviewed journal. I have used this blog to complain about peer reviewers (and, to be fair, I also complain about authors and editors), but right now it feels like a good time to acknowledge the critical value of the peer review system. There are many points of potential challenge in translating, or maybe more accurately transforming, research activities into an accurate and comprehensible text-based record. I don't know about everyone else, but anything I write is likely to be incorrect, awkward, or, more generously, less than ideal as a first draft. If you could see me typing now, you would see that it consists largely of stopping, restarting, deleting and inserting words, punctuation, and spaces, pausing to consider and re-read, followed by more writing and more undoing (and redoing) of previously deleted content. I catch some things, but I almost always rely on one or two pre-readers before I submit anything for publication. Still, peer reviewer comments almost always include questions or recommendations about structure or grammar, which should be the easy stuff to get right before submitting, and yet I don't always get it right. But I believe the primary value of peer review is its role in considering and commenting on the quality of research conduct and the associated credibility of results. So it follows that I am personally getting pretty tired of seeing research from preprints featured in popular media reports, columns, and other types of articles.
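The screening criteria described earlier in this post (publication date, publication language, study design) and the reliance on peer-reviewed work can be sketched as a simple filter over database hits. This is a minimal Python sketch under loudly stated assumptions: the `Record` fields, the default cutoffs, and the `screen` function are all hypothetical and are not drawn from any particular review protocol or software.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Record:
    # Hypothetical fields describing one database hit.
    title: str
    year: int
    language: str
    design: str
    peer_reviewed: bool


def screen(
    records: list[Record],
    min_year: int = 2000,
    languages: tuple[str, ...] = ("English",),
    designs: Optional[set[str]] = None,
    require_peer_review: bool = True,
) -> list[Record]:
    """Keep records that pass every inclusion criterion, preserving order.

    designs=None means study design is not used as a criterion.
    """
    kept = []
    for record in records:
        if record.year < min_year:
            continue  # excluded by publication date
        if record.language not in languages:
            continue  # excluded by publication language
        if designs is not None and record.design not in designs:
            continue  # excluded by study design
        if require_peer_review and not record.peer_reviewed:
            continue  # excluded as not peer reviewed (e.g., a preprint)
        kept.append(record)
    return kept


if __name__ == "__main__":
    hits = [
        Record("Hypothetical study A", 2019, "English", "qualitative", True),
        Record("Hypothetical study B", 1998, "English", "qualitative", True),
        Record("Hypothetical preprint C", 2021, "English", "qualitative", False),
    ]
    for record in screen(hits):
        print(record.title)
```

Setting `require_peer_review=False` is the one-line change that would let preprints and other unpublished work back into the sample, which shows how much of a review's scope can hinge on a single default.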

It feels to me like authors' responses to peer review have shifted dramatically in the past few months. This may be temporary and may be due to the impacts of COVID on instruction and mentoring. But before this year, I had seen only a very small number of poorly (in my view) prepared responses to reviewers. In the last six months, I estimate I have seen more poorly prepared responses than in the previous several years combined. As with my prior posts (written from the author's view), there are two main categories of problems: 1) no organized response to reviewer comments; and 2) deciding, arbitrarily or based on preference, energy, or something else, which comments to respond to.

While I was searching the archives of the open access journal Forum: Qualitative Social Research, I found that a special issue was devoted to virtual ethnography. This issue was published back in 2007. The focus, reflecting interest and typical usage at the time, seemed to be centered on interest groups. But articles about ethics and "Digitally mediated identities" caught my interest. I wonder how many downloads there were of these papers during the past 2-3 years. I'm chastising myself a little for not looking for something like this before; in my opinion, this journal has been a trendsetter with special issues for as long as I have been aware of it.

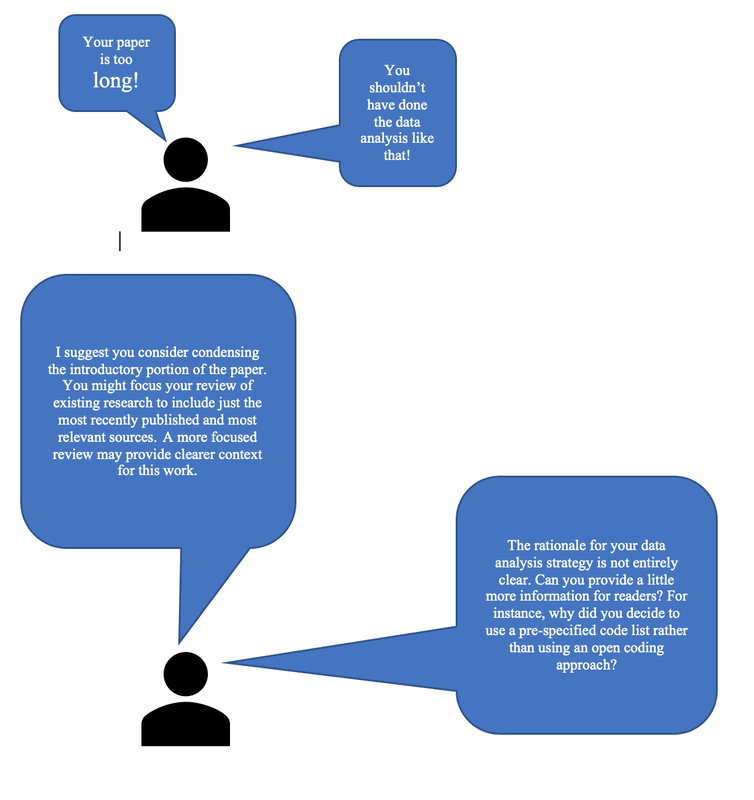

To be honest, the title of the last post was not really accurate. I guess I got carried away with the idea of making an engaging, even grabby, headline! But it is probably accurate to say that most challenges have to do with the two identified categories, those being authors and reviewers/editors. I was complaining about peer review one time with some co-presenters, just before a virtual conference session began. Professor Sally Campbell Galman, the brilliant author of the graphic research comic Shane, the Lone Ethnographer, used the terms "actionable" and "not actionable" for what I had been calling, less concisely, "comments you don't know what to do about."

In my little graphic above, I have included a classic non-actionable comment from a peer reviewer: "Your paper is too long!" In fact, my graphic shows a couple of non-actionable comments and then some (admittedly wordy, but this is my style as a reviewer or editor) versions that provide additional guidance. There are, as with most things, pluses and minuses associated with providing detailed comments.

In a virtual or physical room full of qualitative researchers, a frequent topic of conversation is the difficulty of publishing qualitative research reports. Although there are many types or sources of difficulty, I think most can be allocated to one of two categories: problems associated with authors and problems associated with peer reviewers. (Problems associated with editors may only be solved by waiting for a new journal editor to take over.)

Over the next few weeks, I aim to post some of my thoughts about specific challenges associated with authors and/or peer reviewers. I'll start with one of each today. No matter how much care and thought went into a research project, authors often tend to assume reviewers are correct. Another version of this is the belief that it doesn't matter whether reviewers are correct or not; authors still need to follow their guidance. Sometimes a reviewer offers advice based on preference rather than scholarly or methodological guidelines, and when the thing in question is relatively minor, it may not be worth an argument. I have put things in tables when my preference was not to do so, and duplicated information in tables and text, again when my preference was not to do so. In my opinion, things like this do not substantially change my report. But if a reviewer provides guidance or makes a demand that is unsupported and/or inconsistent with the method, I believe authors are within their rights to decline (politely, with an explanation) to make the change. One common reviewer trap, which may affect newer reviewers, including graduate students, more than reviewers with extensive experience, is to assume it is necessary to criticize something in every manuscript.

I have reviewed many papers between March 2020 and the present. (And I have been very frustrated by slow, poor, and nonexistent reviews of my own submissions!) Most of the journals I review for adhere to the American Psychological Association (APA) style of formatting and paper organization.

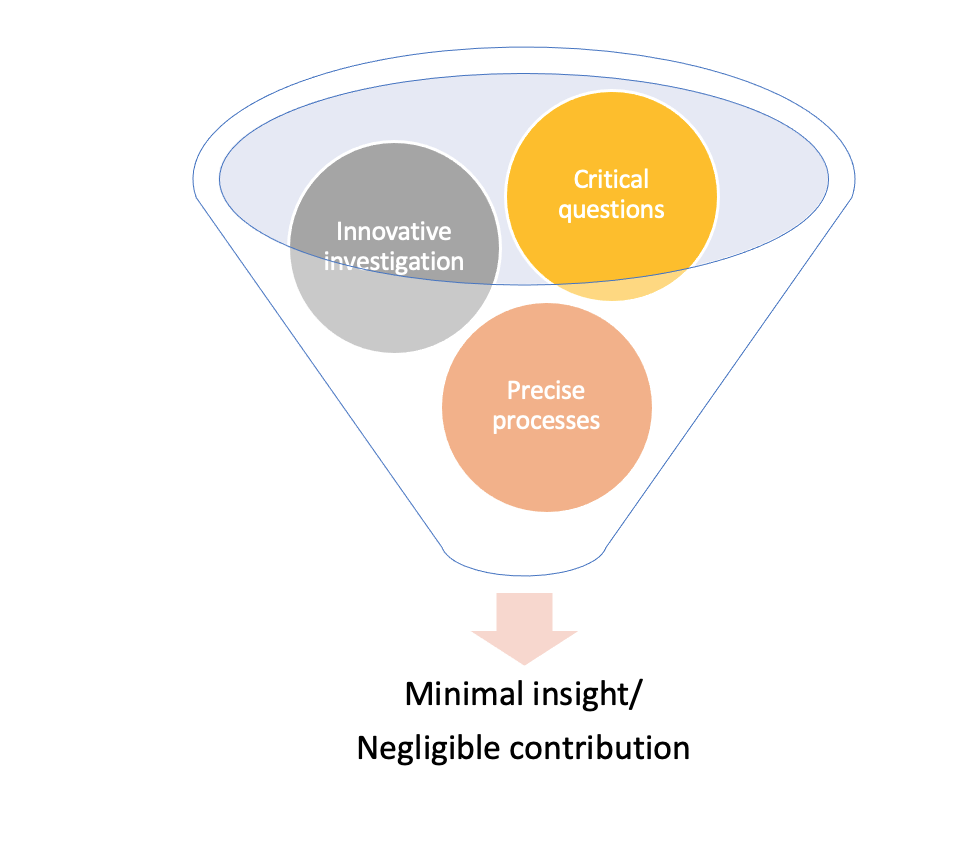

And this is not meant to be a post praising or criticizing that particular style. Because it is widely used in the social and human sciences, it is the style I am most familiar with, going back to the 5th edition. I will say I like some of the changes associated with the 7th edition, especially getting rid of the very confusing rule about how many authors to show in an in-text citation. I have now seen probably a dozen papers in which authors provide a summary of the entire paper as its opening. This is separate from, and follows, the abstract, and basically looks to me like a summary of each section: a few sentences that summarize the introduction/review of literature, a few sentences that summarize the methods, a summary of the findings (hence the title of this post), and a summary of the implications. The style in which this is presented often looks to me more like a philosophical or other humanities paper ("In this work, we illustrate....."). So I went back to my APA guide to see whether this is something new: is there some direction to add an extended summary over and above the abstract?

It has been a long, hard year, and my motivation to post here or contribute in a meaningful way to any scholarly discussions has been low and is just now returning. Since spring of 2020, I have been alternately frustrated and encouraged by my involvement in academic publishing, although I think times of the former far outweigh the latter. I am about to begin a new role as Editor-in-Chief of the Ohio Journal of Public Health, so I continue to consider how I might be part of the solution rather than contributing to (what I perceive as) ongoing problems. I have read a couple of discouraging reports in popular media recently about authors' struggles to publish their academic works in scholarly journals. It is easy to assume the works themselves are the problem.
And it is also unpleasant to express a defensive attitude about one's own work, which is often what is actually communicated when authors rant to their colleagues about crappy editors and cruel reviewers. But in the instances I read about, it seems that political, personal-preference, and even ego-based motives might have been involved. I increasingly fear there is a great deal of interest in preserving the current state of knowledge and in squashing attempts at methodological innovation. I blame aging, set-in-their-ways academics, but that is certainly an easy target and an oversimplified explanation. I know myself that it is easy enough to get caught up in your own sense of self-importance and self-image as an expert, and it is difficult to confront and accept your own areas of weakness, ignorance, or at times irrational preference. I gave someone poor guidance recently and was mildly offended at being questioned in the first place. I took some pleasure in acknowledging I was wrong, and I hope to be a little more willing to really listen in the future before I assume I know more because of my extensive experience (and expensive education, and I'm talking about a public university, by the way), etc., etc.

I made this little graphic in Microsoft SmartArt as I was thinking about how peer review (which is not labeled but is represented by the filter shape) can extract the creative, engaging, and even innovative segments of research reports, resulting in a diluted product that is of little interest and offers little that is new. This seems like it should be more prevalent in positivist*/postpositivist* leaning fields like health and the health sciences, although, ironically, I have heard from colleagues in interpretive/qualitative-friendly fields that there are likewise narrow-minded approaches to how critical** inquiry should be done.
I am reminded of Kuhn (The Structure of Scientific Revolutions, 1962/2012, University of Chicago Press) and his assertion that science does not move forward incrementally; instead, the status quo is displaced and replaced (OK, very oversimplified). Consider this excerpt from Kuhn, however, in light of the general direction of this post: "The scientific enterprise as a whole does from time to time prove useful, open up new territory, display order, and test long-accepted belief. Nevertheless, the individual engaged on a normal research problem is almost never doing any one of these things" (p. 38, emphasis in original).

*I am actually referring more to concrete thinkers, rule-loving scholars, and those who always color within the lines. These people are found conducting qualitative, quantitative, and mixed methods inquiry. They can often be identified by frequent use of peer review comments that begin with "Always," "Never," "You must," "You cannot," etc.

**I use this term in a research context almost always to mean people who question current structures, conventions, rules, and beliefs. Some use the term "trouble" in a similar way.

Author

I am Sheryl L. Chatfield, Ph.D., C.T.R.S. I am a member of the faculty in the College of Public Health at Kent State University. I also co-coordinate the Graduate Certificate in Qualitative Research, and I am a member of the Design Innovation Team at Kent State.

Archives

February 2024
